Washington is abuzz with talk of how the Trump administration might try to reform U.S. foreign assistance programs. If the administration wants to find legislative allies and avoid inter-agency gridlock, focusing more internal resources on evaluation, and on rigorous impact evaluations in particular, is a logical place to start.

Projects that undergo impact evaluations tend to perform better than projects that do not. But bilateral and multilateral development agencies give them widely varying levels of priority: USAID conducts roughly 2 impact evaluations per year, while the World Bank undertakes 53. Within the U.S. Government, the Millennium Challenge Corporation (MCC) is a leader in this regard—rigorous impact evaluation is baked into its institutional DNA.

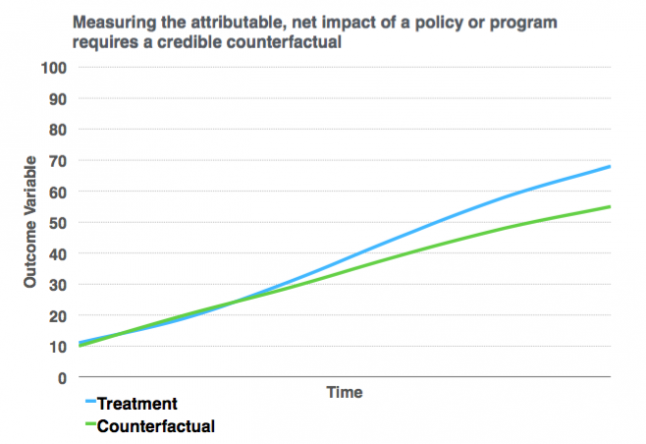

Aid agencies and development banks are already relatively good at producing performance evaluations, which measure the extent to which projects generate their expected outputs. But performance evaluations do not establish causality, that is, whether the change in a development outcome is actually attributable to a particular project or intervention, as opposed to other factors. They do not confront the counterfactual question of what would have happened in the absence of the project or intervention (see Figure 1). This is the unique contribution of impact evaluation.

Figure 1: Treatment and Counterfactual

By way of illustration, consider a recent geospatial impact evaluation (GIE) of 202 projects supported by the Global Environment Facility (GEF) to slow, halt, or reverse land degradation. AidData and the Independent Evaluation Office of the GEF used sub-nationally geocoded project data and satellite-based measures of land cover change and “greenness” (vegetation density) to compare GEF project areas to an otherwise similar set of geographical areas that did not receive GEF support. This quasi-experimental method made it possible to estimate the net, attributable effects of a portfolio of projects that were designed to combat land degradation. The results from this GIE indicate that this portfolio of GEF projects produced significant conservation benefits, sequestering on average 43.5 tons of carbon per hectare. That amounts to roughly 108,800 tons of carbon at each GEF-funded intervention site.
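To make the logic of that comparison concrete, here is a minimal sketch in Python. It is not the method AidData and the GEF actually used, which relied on geocoded project data and a matched set of similar areas. The vegetation-index values are made up purely for illustration, and the function name `attributable_effect` is hypothetical.

```python
from statistics import mean

# Hypothetical change in a vegetation index (after minus before) for each area.
# These numbers are invented for illustration; they are not GEF or AidData data.
treated_change = [0.05, 0.03, 0.06, 0.04]      # areas that received project support
comparison_change = [0.01, 0.02, 0.00, 0.01]   # otherwise similar areas without support


def attributable_effect(treated, comparison):
    """Estimate the net effect as the gap between the average change in
    treated areas and the average change in comparison areas.

    The comparison group stands in for the counterfactual: what would have
    happened in the treated areas without the project.
    """
    return mean(treated) - mean(comparison)


effect = attributable_effect(treated_change, comparison_change)
print(f"Estimated attributable change in greenness: {effect:.3f}")
```

The estimate is only as credible as the comparison group. If the comparison areas differ systematically from the project areas, the gap reflects those differences as well as the project itself, which is why real evaluations invest so much effort in finding "otherwise similar" areas.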

Establishing estimates of programmatic impact also makes it possible to conduct a value-for-money analysis, which first translates estimates of impact into monetary values and then nets out programmatic costs. We found that each of these GEF projects generated roughly $7.5 million in conservation benefits, while the average cost of each project was $4.2 million. That’s a return on investment of roughly 78.5%.
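The arithmetic behind a return-on-investment figure like this can be sketched in a few lines of Python. The helper name `return_on_investment` is hypothetical, and the inputs are the rounded figures quoted above, so the result lands near (not exactly on) the 78.5% reported, which presumably comes from unrounded estimates.

```python
def return_on_investment(benefits_usd: float, cost_usd: float) -> float:
    """Net benefit per dollar spent: (benefits - cost) / cost."""
    if cost_usd <= 0:
        raise ValueError("cost must be positive")
    return (benefits_usd - cost_usd) / cost_usd


# Rounded per-project figures quoted in the text (assumed inputs).
benefits = 7.5e6   # monetized conservation benefits, USD
cost = 4.2e6       # average project cost, USD

net_benefit = benefits - cost
roi = return_on_investment(benefits, cost)

print(f"Net benefit per project: ${net_benefit:,.0f}")
print(f"Return on investment: {roi:.1%}")
```

With these rounded inputs the net benefit is $3.3 million per project and the ratio works out to about 78.6%.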

Although impact evaluations can shed much-needed light on where limited development dollars should be focused, many aid agencies and development banks continue to underinvest in this type of rigorous evidence. For example, a new study shows that USAID conducted 601 performance evaluations between 2011 and 2014, but only 8 impact evaluations over the same period.

Greater investment in impact evaluation would not only help determine which types of projects deliver the best results, but also reassure American taxpayers that certain types of foreign aid provide good value-for-money. This is one of the main points that the outgoing USAID Administrator, Gayle Smith, underscored in a transition memo that she drafted for her successor’s consideration.

There are also some early signs that the prospect of getting better value-for-money through impact evaluation has bipartisan appeal. When recently pressed on the future direction of U.S. foreign assistance under the Trump administration, incoming Secretary of State Rex Tillerson singled out the MCC’s unique model for praise, noting that it was particularly well-positioned to achieve measurable results.